The Control Problem, Revisited

My previous article in this series argued that AI interfaces create an illusion of control: the affordances users interact with (buttons, sliders, approval checkboxes) do not represent the actual architecture of authority inside the system.

What surfaces as a control signal is rarely what governs system behavior. Apparent control and operational authority are not the same thing, and the gap between them is where most AI system failures actually live.

That argument was diagnostic. This one is structural.

If control is an illusion, the question worth asking is not how to restore the feeling of control, but how to design for the conditions that make control meaningful in the first place.

The answer is not better interfaces in the aesthetic sense. It is not cleaner dashboards or more polished status indicators.

The answer is oversight, which is a fundamentally different design problem than interaction.

This distinction matters more as AI systems shift from tools that respond to tools that act.

An AI that completes a draft when asked is easy to evaluate: the output is visible, the action is bounded, and the human has the final word by default.

An AI agent that schedules meetings, sends communications, updates records, and calls downstream services across a multi-hour workflow is doing something categorically different. It is making a sequence of decisions, each of which shapes the next, under partial delegation from a human who is no longer actively watching. The output arrives after the process, not alongside it.

Designing for that kind of system requires rethinking not just what the interface shows, but what structural role the interface plays.

In agentic AI, the interface is no longer primarily where interaction happens. It is where oversight must happen, if it happens at all.

The Oversight Gap

Current interface patterns are not built for oversight. They are built for interaction.

This is not a criticism of design quality. It reflects the design context these patterns were developed in: systems where humans initiate, systems respond, and humans evaluate the response before initiating again.

The feedback loop is tight, the action is discrete, and the human is continuously present in the loop. Interfaces optimized for that pattern emphasize immediacy, legibility, and low-friction interaction. They are designed to minimize the time between intent and output.

Agentic systems break every assumption in that model.

The human initiates once and steps away. The system takes multiple actions over an extended time. The output is the cumulative product of decisions the human did not observe. The feedback loop is not tight; it is often absent until something goes wrong.

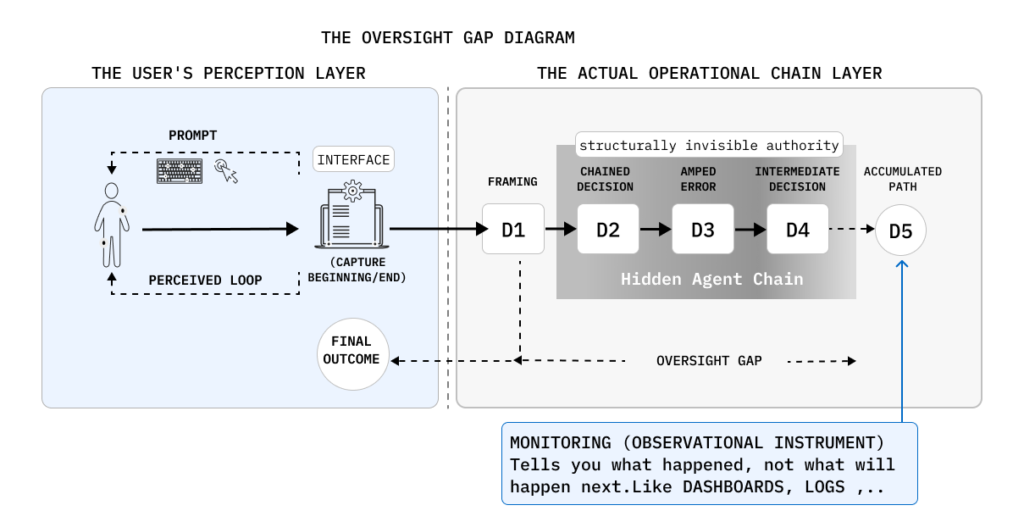

What gets built into these systems instead of oversight infrastructure is monitoring: dashboards that display the current state, activity logs that record what the system did, and status indicators that surface completion or failure.

These are not oversight mechanisms. They are observational instruments. Monitoring tells you what happened. It does not give you the structural capacity to shape what happens next.

The gap is not primarily about information density. Adding more data to a dashboard does not close it.

The oversight gap is structural: it is the absence of mechanisms through which a human can exercise authority over decisions that are in progress, not just evaluate decisions that have already been made. Dashboards show outcomes. Oversight shapes them. These are not points on the same spectrum; they are architecturally different capabilities.

This matters because the point of agentic AI is that it chains decisions. An agent pursuing a goal does not make one choice; it makes a sequence of choices, where each step conditions the next.

Step one sets the frame for step two.

An error introduced at step two is amplified by steps three, four, and five.

By the time output surfaces at the interface, the user is not looking at a decision; they are looking at the cumulative consequence of a decision path they did not observe and cannot retroactively redirect.

Monitoring lets users see the end of that chain.

Oversight means having structural access to the chain while it is running.

From Monitoring to Oversight

The difference between monitoring and oversight is not one of degree. It is a difference in what kind of authority the human retains.

Monitoring is passive and retrospective. It answers the question: what did the system do? A system with strong monitoring capabilities will have excellent logs, well-organized audit trails, clear output presentation, and legible state indicators. All of these are useful. None of them gives humans the capacity to alter the path the system is on before consequences compound. The human is outside the process, observing its trace.

Oversight is active and prospective. It answers a different set of questions:

What is the system about to do, and do I want it to proceed?

What decision is being made, under what assumptions, with what alternatives available?

Where can I redirect, rather than just stop?

Oversight is not observation of a completed process; it is structural participation in a process that is still unfolding.

The confusion between these two modes has real design consequences. When product teams build monitoring dashboards and call them oversight interfaces, they are solving an easier problem while leaving the harder one unaddressed.

A user who can see that their AI agent sent seventeen emails last Tuesday has information but no oversight. A user who can see that the agent is about to send seventeen emails, understands what conditions triggered that decision, and can modify the scope before execution has oversight.

The distinction also reframes the role of the human in the system. Monitoring positions the human as a reviewer. Oversight positions the human as an authority whose judgment is structurally embedded in the process. The first is compatible with full automation; you can monitor a system you cannot influence. The second is not. Oversight, by definition, requires that human judgment can alter outcomes.

What an Oversight Interface Actually Does

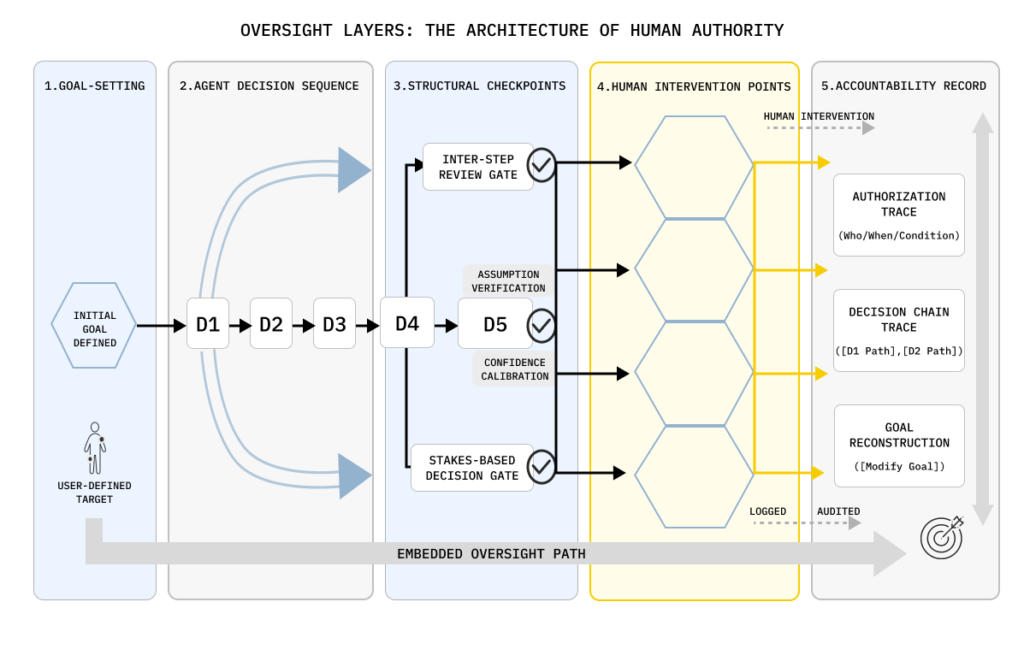

An oversight interface is not a display. It is an architecture. Three structural capabilities define it: visibility into decision logic, not just outcomes; intervention mechanisms that allow redirection, not just termination; and accountability traces that make authority legible after the fact.

Visibility, in this context, means something specific. It is not the display of activity logs or status states. It is the surface-level rendering of decision logic: the system’s current understanding of the goal, the intermediate choices it has made toward that goal, the assumptions it is operating under, and its current confidence about whether those assumptions hold. This is the information that makes meaningful oversight possible. Without it, the human is evaluating outputs without access to the reasoning that produced them. They can approve or reject results, but they cannot assess whether the path that generated those results was appropriate. That is not oversight; it is post-hoc evaluation with incomplete information.

The practical challenge is that decision logic in agentic systems is not always explicit. Agents compute paths toward goals; they do not follow predefined scripts. What designers must surface is not a step-by-step plan invented in advance, but a running account of what the system understood, what it chose, and why, updated as the process unfolds. This requires systems built to expose their reasoning, not just their outputs. It is an architectural requirement, not an interface feature.

Intervention design is where most current oversight interfaces fall shortest.

The default model offers two states: running and stopped. Pause and resume, if available, extend this marginally, but they still frame the choice as binary: the process continues as-is, or it halts entirely.

Neither option addresses the actual need, which is to redirect. A user who identifies a problem mid-process does not necessarily want to stop the system; they want to change its direction. They want to modify the goal, add a constraint, substitute one action for another, or shift the scope of the next step.

None of these requires termination. All of them require a design that treats intervention as a navigational act, not just an emergency brake.

Accountability is the third layer. Oversight interfaces must make it possible, after the fact, to reconstruct who authorized what, when, under what conditions, and with what information available. This is not about blame. It is about making the distribution of authority legible, which is a prerequisite for improving it. Systems that cannot answer the question “which part of this outcome was the system’s decision and which part was the human’s authorization?” cannot be meaningfully improved, because cause and effect are untraceable.
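
An accountability trace can be sketched as a sequence of records that capture, for each step, who held authority and what the human could see at the time. The Python below is a minimal illustration using assumed names (`TraceEntry`, `Authority`, `attribute`). It shows how such a trace can answer which parts of an outcome were the system's decisions and which were human authorizations.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Authority(Enum):
    SYSTEM = "system"                      # the agent decided without a checkpoint
    HUMAN_APPROVED = "human_approved"      # a human reviewed and approved
    HUMAN_REDIRECTED = "human_redirected"  # a human changed the plan
    UNREVIEWED = "unreviewed"              # a checkpoint existed but nobody intervened


@dataclass(frozen=True)
class TraceEntry:
    """Hypothetical trace record; field names are illustrative."""
    step_id: int
    action: str
    authority: Authority
    actor: str                   # hypothetical: "agent" or a user identifier
    info_shown: tuple[str, ...]  # what the human could see at this decision point
    timestamp: datetime


def attribute(trace: list[TraceEntry]) -> dict[str, list[int]]:
    """Group step ids by where authority actually sat."""
    groups: dict[str, list[int]] = {"system": [], "human": [], "unreviewed": []}
    for entry in trace:
        if entry.authority is Authority.SYSTEM:
            groups["system"].append(entry.step_id)
        elif entry.authority is Authority.UNREVIEWED:
            groups["unreviewed"].append(entry.step_id)
        else:
            groups["human"].append(entry.step_id)
    return groups


now = datetime.now(timezone.utc)
trace = [
    TraceEntry(1, "draft_invites", Authority.SYSTEM, "agent", (), now),
    TraceEntry(2, "send_invites", Authority.HUMAN_APPROVED, "user-42",
               ("goal", "recipient list", "alternatives"), now),
    TraceEntry(3, "update_crm", Authority.UNREVIEWED, "agent", ("status only",), now),
]
print(attribute(trace))
```

Recording `info_shown` alongside the authority label matters. An approval given with only a status line is a different kind of authorization from one given with the goal and alternatives in view.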

Checkpoints and the Architecture of Intervention

The design of intervention points is the most concretely tractable part of oversight interface design, and also the most frequently mishandled.

The instinct is to insert checkpoints frequently, interrupting the system often so the human feels engaged. This instinct is wrong, because checkpoint frequency can fail in both directions. Too many checkpoints produce automation bias through a different mechanism than too few: users who are constantly interrupted at low-stakes moments learn to approve without evaluating. The checkpoint becomes a confirmation habit rather than a judgment moment. The form of oversight is preserved while the substance is eliminated. When a genuinely consequential checkpoint arrives, it receives the same reflexive approval as the routine ones that preceded it.

Checkpoints must therefore be designed to match the decision structure of the task, not the comfort level of the user. The operative question is not “how often should we interrupt?” but “at which points in the decision chain does the choice made here materially alter the range of outcomes still available?” Those are the intervention points. Everything else is noise.

What makes a decision point appropriate for human intervention is a combination of irreversibility, uncertainty, and scope. Actions that cannot be undone (messages sent, records modified, resources committed) warrant a pause before execution, not after. Actions the system takes with low confidence, where the gap between its best guess and its next-best guess is narrow, should surface that uncertainty explicitly rather than proceeding with false confidence. Actions that fall outside the parameters of previously validated behavior, where the system is extrapolating rather than executing, require human judgment because the system’s reliability in that space is structurally unknown.
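
The three criteria above (irreversibility, uncertainty, and departure from validated behavior) can be expressed as a simple placement rule. This is a sketch under stated assumptions. The agent is assumed to score its candidate actions, and the confidence margin is taken to be the gap between the best and next-best scores. `MIN_CONFIDENCE_MARGIN` is an illustrative threshold, not a recommended value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    name: str
    reversible: bool
    best_score: float          # agent's score for its chosen option (hypothetical)
    next_best_score: float     # score for the runner-up option
    within_validated_range: bool


# Illustrative threshold: a narrower gap than this is treated as low confidence.
MIN_CONFIDENCE_MARGIN = 0.15


def checkpoint_reasons(action: ProposedAction) -> list[str]:
    """Return the reasons this action needs human judgment; empty means proceed."""
    reasons = []
    if not action.reversible:
        reasons.append("irreversible: pause before execution, not after")
    if action.best_score - action.next_best_score < MIN_CONFIDENCE_MARGIN:
        reasons.append("uncertain: best and next-best options are close")
    if not action.within_validated_range:
        reasons.append("extrapolating: outside previously validated behavior")
    return reasons


send = ProposedAction("send_email", reversible=False, best_score=0.9,
                      next_best_score=0.3, within_validated_range=True)
print(checkpoint_reasons(send))
```

Returning reasons rather than a bare boolean lets the checkpoint tell the user why it is interrupting, which is part of what makes the pause a judgment moment rather than a gate.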

The design of what to show at a checkpoint matters as much as the placement of the checkpoint itself. A pause that asks the user only “The system is about to proceed: continue or stop?” is not oversight. It is a gate without information. What each intervention point needs is what the system understands the goal to be at this moment, what it is about to do, what alternatives exist, and what would change if the user redirected. This is the information that makes the intervention meaningful. Without it, the human is approving a process they cannot evaluate.
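
One way to guarantee that a checkpoint carries context, rather than acting as a bare gate, is to make that context structurally required. The minimal Python sketch below uses a hypothetical `CheckpointView` whose four fields mirror the list above. It refuses to construct a checkpoint if any of those fields is empty.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckpointView:
    """What a checkpoint shows the user (field names are hypothetical)."""
    goal_as_understood: str
    about_to_do: str
    alternatives: tuple[str, ...]
    effect_of_redirect: str

    def __post_init__(self) -> None:
        # A checkpoint that only asks "continue or stop?" is a gate without
        # information, so refuse to build one with any field left empty.
        missing = [name for name, value in vars(self).items() if not value]
        if missing:
            raise ValueError(f"checkpoint lacks context: {', '.join(missing)}")

    def render(self) -> str:
        alts = "\n".join(f"  - {a}" for a in self.alternatives)
        return (
            f"Goal (as understood): {self.goal_as_understood}\n"
            f"About to: {self.about_to_do}\n"
            f"Alternatives:\n{alts}\n"
            f"If you redirect: {self.effect_of_redirect}"
        )


view = CheckpointView(
    goal_as_understood="invite the design team to Thursday's review",
    about_to_do="send 17 calendar invites",
    alternatives=("send to the 3 leads only", "save as drafts"),
    effect_of_redirect="narrowing scope leaves the other 14 uninvited for now",
)
print(view.render())
```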

Irreversibility deserves special design attention. The asymmetry between actions that can be undone and actions that cannot is one of the most consequential structural features of any agentic workflow, and it is rarely surfaced explicitly. Designing for oversight means making this asymmetry visible before the irreversible action is taken, not as a warning label, but as a structural pause that gives the human enough information and enough time to decide whether to proceed. Speed, in this context, is the enemy of oversight. Optimizing for execution velocity in agentic systems is a design decision to reduce the window for human judgment, whether or not it is recognized as such.

Designing for Judgment, Not Control

There is a persistent confusion in oversight interface design between giving users control and supporting human judgment. These are related but not equivalent, and conflating them leads to interfaces that provide the form of control without the conditions that make judgment possible.

Control, in the narrow sense, means the user can affect the system’s state: start, stop, configure, or override. An interface with strong control affordances gives the user many ways to alter what the system does. But control without judgment is not oversight; it is authority without the information needed to exercise it well.

The user can stop the system, but they do not know whether stopping it is the right response to the condition they are observing. They can override the system’s decision, but they do not understand what that decision was based on, so they cannot evaluate whether their override improves the outcome.

The conditions for judgment are different from the conditions for control. Judgment requires context: what situation the system thinks it is in, what alternatives it considered, and what would have changed if the user had specified the goal differently. It requires meaning, not just data: not raw logs, but an account of what the logs signify for the task at hand. And it requires time: a structural pause long enough for the human to form a considered view before the action is committed.

The insight that humans need a thought partner capable of asking clarifying questions, not just a system that passively executes, applies directly to oversight design. An oversight interface that only shows the system’s output and asks “approve or reject?” does not support judgment.

It is asking the human to ratify a black box. An oversight interface that explains the decision logic, identifies the assumptions the system made, and asks the human whether those assumptions are valid treats human judgment as something to be engaged, not just consulted.

This has implications for how oversight interfaces present uncertainty. Systems that surface only confident outputs, presenting results without indicating the uncertainty underlying them, systematically undermine human judgment by hiding the conditions under which judgment is most needed.

When a system is confident, oversight is less urgent. When a system is operating at the edge of its reliable range, human judgment is structurally necessary. But if the interface presents both cases with the same visual authority, the human has no basis for allocating their attention appropriately. Oversight interfaces must make uncertainty a first-class information type, not something that disappears into the polished surface of the output.

Design Principles for Oversight Architecture

These are not best practices in the conventional sense. They are structural commitments: design decisions that determine whether an oversight interface genuinely supports human authority, or whether it provides the appearance of oversight while real authority resides elsewhere in the system.

Surface decision logic, not just outputs. An oversight interface that shows users what the system did without showing them why it did it gives users no basis for evaluating the decision. Visibility into the system’s reasoning (what it understood the goal to be, what it considered and rejected, and what it assumed) is not a transparency feature. It is the precondition for oversight.

Design for redirect, not just termination. Stopping and pausing are necessary but insufficient. Users who can only halt a process that is going wrong are in a weaker position than users who can alter its direction. Oversight interfaces must make redirection a first-class affordance: the ability to modify scope, substitute actions, adjust constraints, or shift goals without forcing the user to restart from zero. The control palette determines the shape of oversight; a binary palette produces binary oversight.

Treat irreversibility as a design primitive. Every action in an agentic workflow is either reversible or it is not, and this distinction has more consequences for oversight design than almost any other property of the action. Irreversible actions require structural pauses, richer information presentation, and meaningful confirmation, not as friction for its own sake, but because the asymmetry between “undo” and “cannot undo” is one that the system understands and the user must be helped to understand as well.

Expose uncertainty before it becomes error. Oversight is most valuable before the moment of failure, not after it. Systems that communicate their confidence levels, flag when they are operating outside previously validated ranges, and distinguish between high-confidence and low-confidence decisions give humans the opportunity to intervene at the point of maximum leverage, before the uncertain decision compounds into a consequential outcome.

Design checkpoints to scale with action magnitude. Not all decisions warrant the same degree of interruption. Routine, low-stakes, easily reversible actions can proceed with minimal friction. Decisions that are high-magnitude, low-confidence, or irreversible should attract proportionally more structural attention: more visible presentation, richer context, slower confirmation, and clearer alternatives. The checkpoint architecture should encode the system’s own assessment of when human judgment is most needed, not just provide uniform gates at predefined intervals.
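
Scaling checkpoints with action magnitude can be sketched as a graded policy rather than a yes/no gate. In this Python sketch, `magnitude` and `confidence` are hypothetical scores in [0, 1], and the cut-offs are illustrative assumptions. The one structural rule it encodes from the text is that irreversible actions always pause before execution.

```python
from enum import IntEnum


class Tier(IntEnum):
    PROCEED = 0           # routine: no interruption
    NOTIFY = 1            # proceed, but surface the action in the running account
    CONFIRM = 2           # pause with context and wait for explicit approval
    STRUCTURED_PAUSE = 3  # full context, alternatives, and time to decide


# Illustrative cut-offs; a real system would need to calibrate these per task.
HIGH_MAGNITUDE = 0.5
LOW_CONFIDENCE = 0.6


def checkpoint_tier(magnitude: float, confidence: float, reversible: bool) -> Tier:
    """Map an action's stakes to how much human attention it should attract."""
    risk_signals = int(magnitude >= HIGH_MAGNITUDE) + int(confidence < LOW_CONFIDENCE)
    if not reversible:
        # Irreversible actions always pause before execution; any other
        # risk signal escalates the pause.
        return Tier.STRUCTURED_PAUSE if risk_signals else Tier.CONFIRM
    return (Tier.PROCEED, Tier.NOTIFY, Tier.CONFIRM)[risk_signals]


for args in [(0.1, 0.95, True), (0.8, 0.4, True), (0.8, 0.95, False)]:
    print(args, checkpoint_tier(*args).name)
```

Routine actions pass through untouched, so the interruptions that do occur keep their signal value. This is the point made earlier about checkpoints decaying into confirmation habits.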

Maintain accountability as an ongoing trace. The audit trail is not an afterthought; it is part of the oversight structure itself. Interfaces that record what the system did, what information the human had available at each decision point, and what the human authorized (or failed to intercept) create the conditions for meaningful accountability. This enables not just retrospective review but prospective improvement: patterns in where oversight failed become visible, and the design can adapt accordingly.

The Interface as the Location of Authority

This series has argued, across three articles, that the interface is not the system. What appears in the interface (the affordances, the outputs, the controls) does not fully represent the architecture of the system behind it, and treating the interface as if it did produces predictable failures: misplaced trust, abandoned oversight, diffuse accountability.

The argument in this article is a corollary: if the interface is not the system, it must nonetheless be where authority is made visible.

Agentic systems do not wait for users to stay engaged. They pursue goals, chain decisions, and commit consequences across time horizons that outlast user attention. The conditions for meaningful human oversight of such systems (structured intervention points, visible decision logic, explicit uncertainty, and accountable traces) do not emerge automatically from the capability of the underlying AI. They must be designed.

An oversight interface is not the same as a control panel. It is not a dashboard with more features. It is an architectural commitment to positioning the human as an authority inside the process, not just a reviewer of its outputs. The interface is not where the system lives. But it is where human authority either takes form or dissolves into the illusion that it already has.

*This is the third article in the AI Systems & Interface Design series. The series draws on frameworks developed in the book “The Interface Is Not the System.”*