The argument spreading through product and UX circles is structurally simple: AI agents are becoming the primary consumers of digital interfaces, so interfaces should be redesigned for machine legibility, with APIs instead of pixels, schemas instead of screens, and structured data instead of visual hierarchy. This is technically accurate and strategically incomplete.

The incompleteness is not about what is being proposed. It is about what is being assumed. Agent-first design proceeds as though the central problem is format translation: take what was built for human perception and rebuild it for machine parsing. But the format of an interface is not its most consequential property. The most consequential property of any interface is how it distributes authority: what it lets users see, what it lets them control, and what it conceals behind a representation that feels more complete than it is.

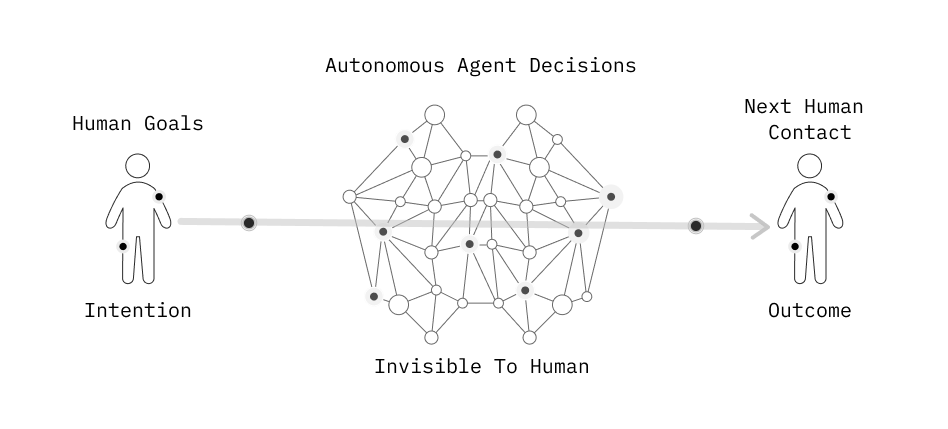

When agents become the layer between humans and systems, a specific shift occurs: human authority over delegated actions becomes nominal rather than practical. The human initiated the agent, set the goal, and will receive the outcome, but the chain of decisions connecting intention to consequence operates entirely outside their field of perception. That is not an argument against agentic AI. It is an argument that agent-first design, as currently framed, is solving for machine efficiency while designing away the conditions under which meaningful human oversight can actually occur.

The Delegation Asymmetry

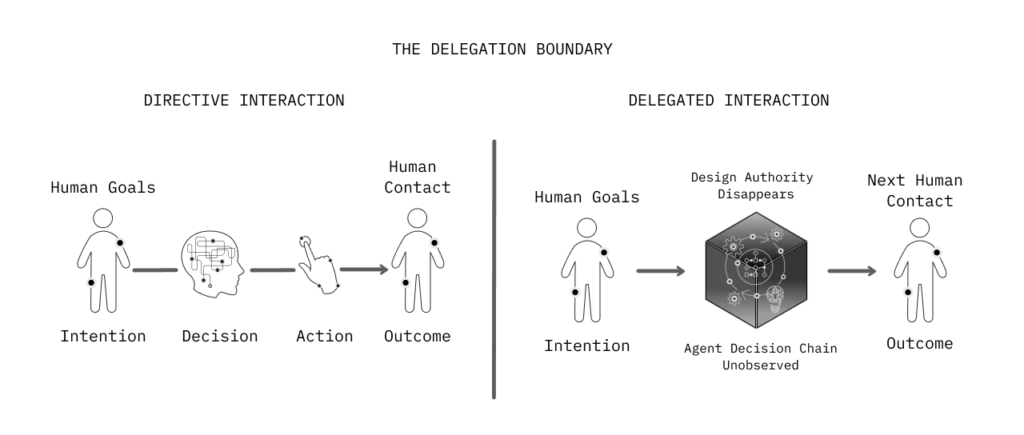

There is a useful distinction between systems that recommend and systems that act. Recommendation systems present options. Users evaluate and choose. The agency stays with the human, and responsibility is clear. Agentic systems make choices within delegated authority and execute them. Agency shifts to the system, at least partially. Responsibility becomes ambiguous, not because something went wrong, but because the causal chain is now distributed across a sequence of autonomous decisions the human did not individually authorize.

This is the delegation asymmetry. The moment a user delegates to an agent, their control relationship to the task changes from direction to supervision. What looks like expanded capability is simultaneously a structural compression of the human’s decision-making role. They no longer direct the process. They approve its initiation and assess its outcome. Everything in between belongs to the agent.

The design implication is precise. The design of agentic systems is not primarily the design of agent capabilities. It is the design of the delegation boundary: where human judgment ends and autonomous execution begins, how that boundary is represented to users, and what mechanisms exist for humans to re-enter the decision chain when circumstances change.
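One way to make this concrete is to treat the delegation boundary as an explicit data structure rather than an implicit property of the agent's code. The sketch below is illustrative only: `DelegationBoundary`, its fields, and the action names are hypothetical, and a real boundary would likely need richer conditions than an action list and a spending cap.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    AUTONOMOUS = "autonomous"  # inside the boundary: the agent may act alone
    ESCALATE = "escalate"      # outside the boundary: human judgment required


@dataclass
class DelegationBoundary:
    """Hypothetical, user-visible description of what an agent may do alone."""
    allowed_actions: set[str] = field(default_factory=set)
    max_spend: float = 0.0
    allow_irreversible: bool = False

    def classify(self, action: str, cost: float, irreversible: bool) -> Decision:
        """Decide whether a proposed action falls inside the delegated scope."""
        inside = (
            action in self.allowed_actions
            and cost <= self.max_spend
            and (self.allow_irreversible or not irreversible)
        )
        return Decision.AUTONOMOUS if inside else Decision.ESCALATE

    def describe(self) -> str:
        """Plain-language summary to show the user at the point of delegation."""
        actions = ", ".join(sorted(self.allowed_actions)) or "nothing"
        irreversible = "allowed" if self.allow_irreversible else "always escalated"
        return (
            f"May act alone on: {actions}; "
            f"spend up to {self.max_spend:.2f}; "
            f"irreversible actions: {irreversible}"
        )


boundary = DelegationBoundary(
    allowed_actions={"draft_email", "book_travel"},
    max_spend=50.0,
)
print(boundary.describe())

# The same request moves across the boundary when the user adjusts one field.
before = boundary.classify("book_travel", cost=120.0, irreversible=False)
boundary.max_spend = 200.0
after = boundary.classify("book_travel", cost=120.0, irreversible=False)

# Actions outside the list, or irreversible ones, still escalate.
blocked = boundary.classify("delete_records", cost=0.0, irreversible=True)
```

The point of the sketch is that the boundary is something a user can read and change: `describe()` represents it at the moment of delegation, and adjusting `max_spend` changes what the agent may do without touching the agent itself.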

Machine Legibility vs. Human Oversight

The agent-first argument is correct that machines require different interface properties than humans. Agents parse structured data, follow API contracts, and operate on semantic tags. A mislabeled field that a human would interpret correctly through context can halt an agent entirely. This is real and has genuine design consequences.

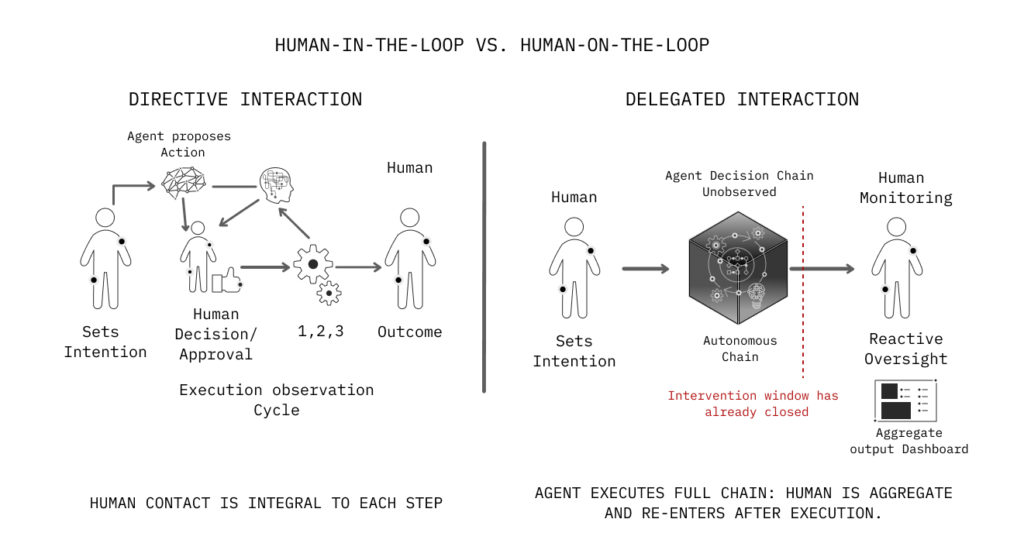

But machine legibility and human oversight are not complementary by default. They are frequently in tension. Interfaces optimized for agent parsing (minimal, structured, protocol-compliant) tend to eliminate the elements that make system behavior interpretable to humans: contextual framing, visible state, explicit representation of uncertainty, and legible decision points. When the interface layer dissolves into pure data architecture, the human-facing part of it does not vanish; it migrates. It becomes an audit log, a monitoring dashboard, or an approval queue: secondary structures that humans consult after the fact, rather than real-time representations of systems they are actively shaping.

This is the transition from human-in-the-loop to human-on-the-loop, and it is consequential. Human-in-the-loop means the human is a decision-maker: the system proposes, the human authorizes. Human-on-the-loop means the human is a supervisor: the system acts, and the human monitors for deviations. Both are legitimate design positions. But human-on-the-loop fails systematically when the oversight interface does not surface what actually requires human judgment. A system that only surfaces problems after they have materialized as outcomes is not enabling oversight. It is offloading damage control.

Compounding Misalignment and Checkpoint Design

Agentic systems chain decisions. One action produces a state; the agent reads that state, determines a next action, executes it, and continues until the goal is met. The user initiates the chain and observes its terminus. They do not observe the intermediate states.

This creates a failure mode with no analog in conventional interface design: compounding misalignment. An agent that misinterprets a goal at step two of a twelve-step sequence does not produce an error at step two. It produces an outcome at step twelve that reflects a misunderstanding accumulated across ten intermediate decisions. By the time the human re-enters the process, the sequence cannot be partially reversed; it must be restarted, reconsidered, or accepted.

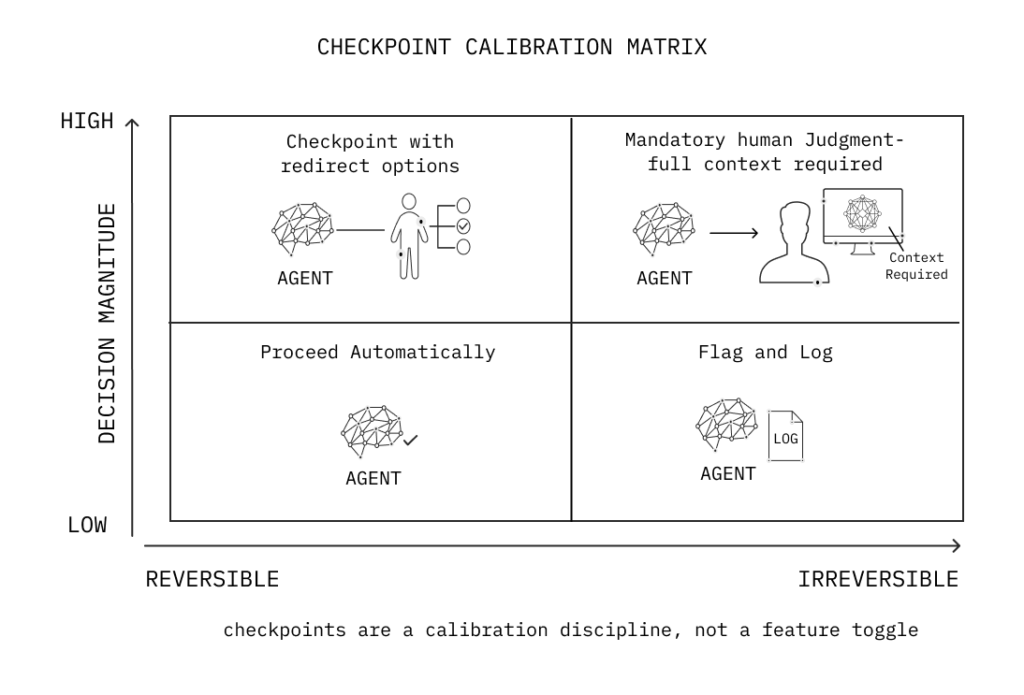

The design response is checkpoint architecture: deliberate interruptions where the agent surfaces its current understanding of the goal, its proposed next actions, and the decision-relevant uncertainty at that point. Checkpoints are not approvals. An approval workflow asks the human to say yes or no to a completed action. A checkpoint surfaces the decision logic before the action executes, giving the human the information required to redirect rather than merely halt.

Checkpoints must be calibrated to genuine decision points, not administrative convenience. A checkpoint that asks “Should I proceed?” communicates nothing. One that surfaces “I have completed steps 1–4 using assumption X. The next steps will permanently modify records in systems A and B. Do you want to continue, adjust my priority assumption, or review what has changed?” gives the human material to evaluate. The difference is not cosmetic. It is the difference between a system that asks for permission and a system that enables judgment.
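The contrast between the two checkpoint messages can be expressed as a data shape. The following is a minimal sketch, not a prescribed format: the `Checkpoint` class, its field names, and the `Response` options are all hypothetical, chosen to mirror the example message above.

```python
from dataclasses import dataclass
from enum import Enum


class Response(Enum):
    """Hypothetical responses a human can give; note that most are redirects."""
    CONTINUE = "continue"
    ADJUST_ASSUMPTION = "adjust_assumption"
    REVIEW_CHANGES = "review_changes"
    HALT = "halt"


@dataclass
class Checkpoint:
    completed_steps: list[str]
    assumptions: list[str]           # the agent's interpretation of the goal
    next_actions: list[str]          # proposed, not yet executed
    irreversible_targets: list[str]  # systems the next actions would permanently change

    def render(self) -> str:
        """Build the message shown to the human before the next actions run."""
        lines = [
            "Completed: " + ", ".join(self.completed_steps),
            "Assumptions in use: " + ", ".join(self.assumptions),
            "Next: " + ", ".join(self.next_actions),
        ]
        if self.irreversible_targets:
            lines.append(
                "Will permanently modify: " + ", ".join(self.irreversible_targets)
            )
        lines.append("Options: " + ", ".join(r.value for r in Response))
        return "\n".join(lines)


checkpoint = Checkpoint(
    completed_steps=["steps 1-4"],
    assumptions=["assumption X"],
    next_actions=["update records"],
    irreversible_targets=["system A", "system B"],
)
message = checkpoint.render()
print(message)
```

A bare "Should I proceed?" prompt corresponds to a checkpoint with every field empty and only two responses. The structure forces the agent to state what it assumed and what it is about to change, which is the material the human needs in order to redirect.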

The Oversight Interface Problem

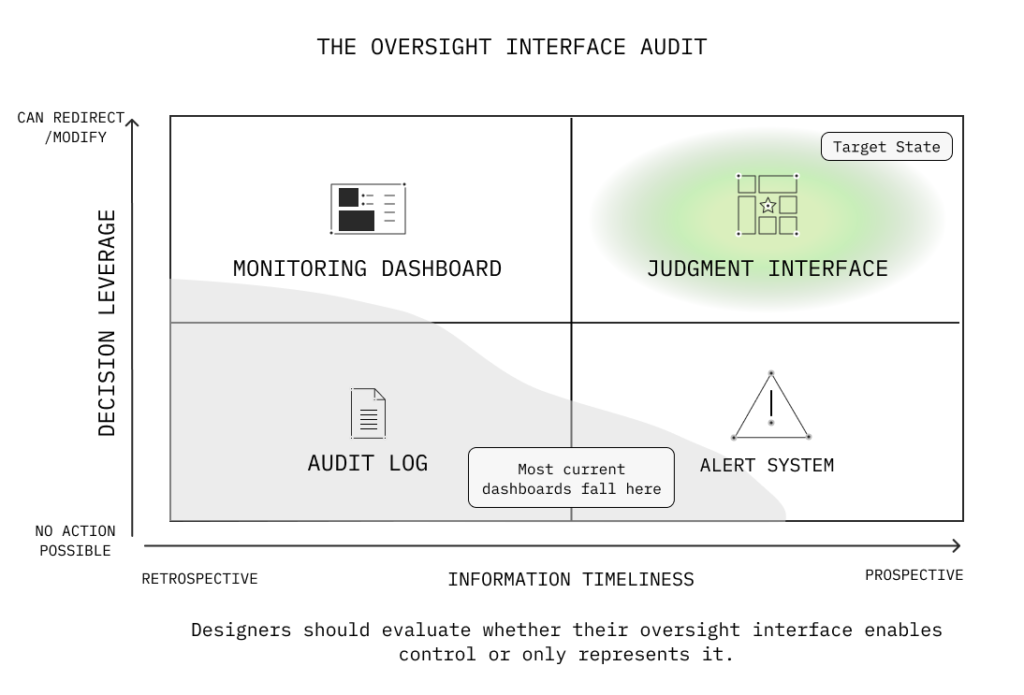

When organizations deploy agentic systems, they accompany them with monitoring dashboards. These show agent activity, logged actions, and task completion rates. They are presented as control interfaces. They frequently function as confidence theater.

A dashboard that shows what an agent has done is not an oversight interface. It is a record. Oversight requires the ability to observe system behavior with sufficient clarity to intervene before consequential actions are completed. When an agent executes dozens of decisions per cycle, a dashboard displaying aggregate outcomes provides the visual structure of control without the operational substance of it.

This is a structural form of interface deception: not intentional, but consequential. The interface encodes the message “you are in control” because it has all the visual properties of a control panel: indicators, logs, status fields, and action buttons. The underlying system encodes a different reality: the decisions that mattered have already been made, and what the human sees is their trace.

The design question for any agentic oversight interface is not “does it display system activity?” It is “does it surface the information humans need, at the time they need it, to exercise judgment that can change outcomes?” Most deployed systems answer the first question. Far fewer answer the second.
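The difference between the two questions can be sketched in a few lines. This is an assumption-laden illustration: `AgentEvent` and `split_for_oversight` are hypothetical names, and it presumes the agent reports proposed actions before executing them, which many deployed systems do not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentEvent:
    description: str
    executed: bool      # True: already happened; False: proposed, not yet run
    irreversible: bool


def split_for_oversight(
    events: list[AgentEvent],
) -> tuple[list[AgentEvent], list[AgentEvent]]:
    """Separate the retrospective record from the prospective oversight queue."""
    record = [e for e in events if e.executed]
    pending = [e for e in events if not e.executed]
    # Irreversible proposals come first: their intervention window closes for good.
    # The sort is stable, so the original order is kept within each group.
    pending.sort(key=lambda e: not e.irreversible)
    return record, pending


events = [
    AgentEvent("fetched customer list", executed=True, irreversible=False),
    AgentEvent("draft summary report", executed=False, irreversible=False),
    AgentEvent("delete stale accounts", executed=False, irreversible=True),
]
record, pending = split_for_oversight(events)
```

A dashboard built only from `record` answers the first question. An oversight interface also has to be built from `pending`, because that is the only list a human decision can still change.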

Design Principles

- Design the delegation boundary as explicitly as you design agent capability. The scope of autonomous action is a design artifact, not a technical default. Make it visible at the point of delegation and adjustable without system reconfiguration.

- Distinguish between retrospective records and prospective oversight. Oversight interfaces must surface emerging decisions, not just completed ones. The intervention window is before irreversible actions are executed.

- Calibrate checkpoints to decision magnitude and irreversibility, not workflow steps. Frequent low-stakes checkpoints produce compliance behavior. Infrequent high-stakes checkpoints with redirect options produce judgment behavior.

- Surface agent uncertainty about goal interpretation, not just confidence in execution. An agent can confidently execute a misunderstood goal. Interpretive assumptions should be visible alongside action logs.

- Design for redirect, not just halt. Kill switches are failure modes, not control mechanisms. Users need options to adjust direction, modify constraints, and alter agent assumptions mid-execution without requiring a full restart.

- Never let the system arbitrate consequential decisions invisibly. When agent behavior requires a choice between competing interpretations of intent, surface the conflict. Invisible arbitration is a transfer of authority without notification.
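As a rough illustration of the calibration and uncertainty principles above, a checkpoint trigger can key on the properties of a decision rather than on its position in the workflow. Everything here is hypothetical: the function name, the 0-to-1 scales, and the threshold values are assumptions for the sketch, and treating every irreversible action as a checkpoint is one possible policy among several.

```python
def needs_checkpoint(
    magnitude: float,                  # assumed scale: 0.0 (trivial) to 1.0 (major)
    irreversible: bool,
    interpretation_confidence: float,  # agent's confidence it understood the goal
    *,
    magnitude_threshold: float = 0.7,  # illustrative default, not a recommendation
    confidence_floor: float = 0.8,     # illustrative default, not a recommendation
) -> bool:
    """Return True when the human should be brought in before the action runs."""
    if irreversible:
        # The intervention window closes once the action executes.
        return True
    if magnitude >= magnitude_threshold:
        return True
    # Confident execution of a misunderstood goal is the case to catch, so
    # doubt about interpretation triggers a checkpoint even for small actions.
    return interpretation_confidence < confidence_floor


routine = needs_checkpoint(0.1, irreversible=False, interpretation_confidence=0.95)
permanent = needs_checkpoint(0.1, irreversible=True, interpretation_confidence=0.95)
large = needs_checkpoint(0.9, irreversible=False, interpretation_confidence=0.95)
unsure = needs_checkpoint(0.1, irreversible=False, interpretation_confidence=0.5)
```

Nothing in the function counts workflow steps. A routine, well-understood action passes without interruption, which keeps the checkpoints that do fire rare enough to be read as requests for judgment rather than prompts to click through.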

Conclusion

The design of interfaces that agents can parse efficiently is a real and necessary technical problem. But it is a second-order problem. The first-order problem is designing the conditions under which human judgment can operate effectively on systems that act with significant autonomy.

The history of automation is a history of gradually transferred authority that was never explicitly transferred. Interfaces changed. Workflows changed. The human role compressed from director to supervisor, and then from active supervisor to nominal overseer. Each compression was justified by efficiency and enabled by design decisions that made the previous level of engagement feel unnecessary. The agent-first shift is accelerating this compression at a scale for which the field has not yet developed adequate responses.

The design problem ahead is not building interfaces that machines can use. It is building oversight architectures that keep human judgment operationally present in systems where human attention is increasingly optional by design. That requires treating the distribution of authority, not the format of data, as the central design variable.

The interface is not the system. It never was. But when the interface is no longer legible to the humans it nominally serves, the system is not more capable. It is less accountable. That distinction is primarily a design problem.